When someone asks an AI search engine, “What’s the best CRM for a 50‑person sales team?”, the assistant doesn’t return a list of pages. It produces a single, consolidated answer: it selects a few tools, explains why they fit the situation, and presents the recommendation as if the decision were already made. The user scans it once and moves on. Your brand is either inside that answer, or it doesn’t exist in the decision.

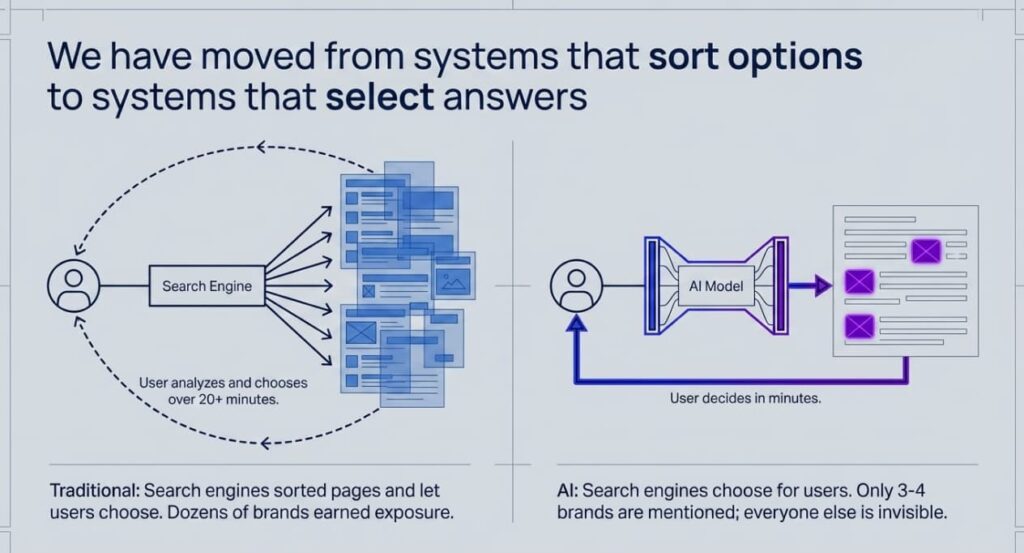

This is the shift from ranking to selection. Traditional search engines sorted pages and let users decide which results to open. AI search systems generate synthesized responses that effectively pre‑select brands for users, shrinking the range of options they ever see compared to a classic search results page.

In a 2024 note, Gartner projected that traditional search volume would drop by around 25% by 2026 as AI chatbots and virtual agents absorbed more queries. Around the same time, SparkToro’s 2024 analysis found that roughly 60% of Google searches ended without a click. Early evidence since then suggests that AI‑generated answers intensify this zero‑click behavior, although long‑term data for AI‑first interfaces is still limited.

Brands that align their positioning and content with how AI systems source and synthesize information are more likely to appear in generated answers. Those optimizing solely for traditional ranking factors may find their visibility limited in AI-mediated search contexts.

How the Discovery Experience Has Changed

The easiest way to grasp the shift is to compare the same buying decision across two eras.

| Aspect | Traditional Search (2023) | AI Search (2026) |
|---|---|---|
| User query | “best analytics platforms for SaaS” | “best analytics platforms for SaaS” |
| What appears | 10 blue links, 3 ads, featured snippet, Reddit thread | A synthesized paragraph naming 2-3 tools with reasoning |
| User behavior | Opens 4-5 tabs, reads comparison articles, spends 20+ minutes | Reads one answer, visits 1 site to confirm, decides in minutes |
| Brands exposed | Dozens across organic results, ads, and snippets | 3-4 mentioned in the answer; everyone else invisible |
| What earns visibility | Page-level SEO signals (keywords, links, authority) | Brand-level signals across the entire web (mentions, consistency, context) |
| Click behavior | 40-60% of searches produce a click | 93% of Google’s AI Mode searches produce zero clicks |

That last number deserves attention. Early data from Semrush, based on monitoring of Google’s experimental AI Mode interface, suggests zero-click rates in that environment may substantially exceed those of standard search. However, this figure derives from a specific tool’s methodology applied to a limited, non-representative sample during a beta period; extrapolation to broader search behavior would be premature. The user gets a complete answer. The websites behind that answer may never see the visit.

This means the competitive surface has collapsed. In traditional search, page-two results still got some traffic. In AI search, there is no page two. An AI assistant’s answer includes a limited set of brand mentions based on its training associations and retrieved sources, leaving many qualified alternatives unrepresented in the generated output.

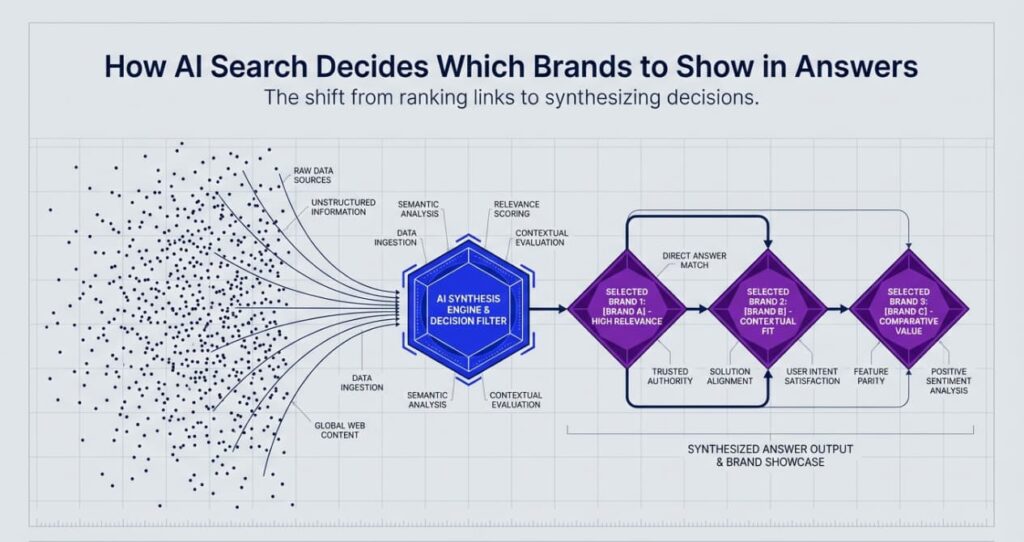

How AI Decides Which Brands to Name

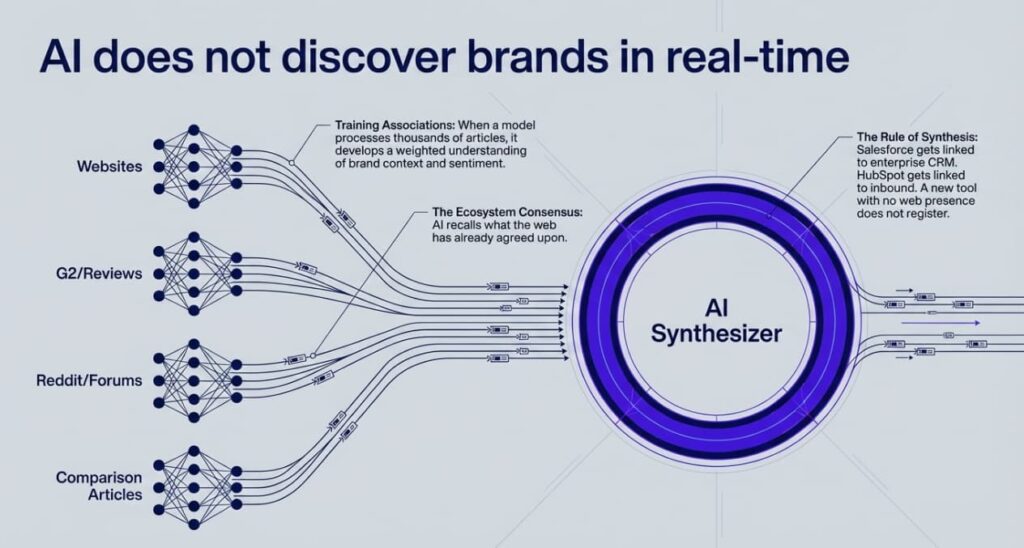

AI models build internal associations during training. When a model processes thousands of articles about CRM software, it learns which brands repeatedly show up in connection with specific problems, audiences, and outcomes. Salesforce gets linked to enterprise CRM. HubSpot gets linked to inbound marketing and mid‑market teams. A newer tool with limited web presence may not register at all.

The important point is that these are not page-level scores. The system doesn’t think in terms of “this page ranks for this keyword.” Instead, it builds associations like “this brand solves this problem for this type of customer.” When a buyer asks for a recommendation, it doesn’t rank links; it draws on the brands most strongly associated with that need and audience.

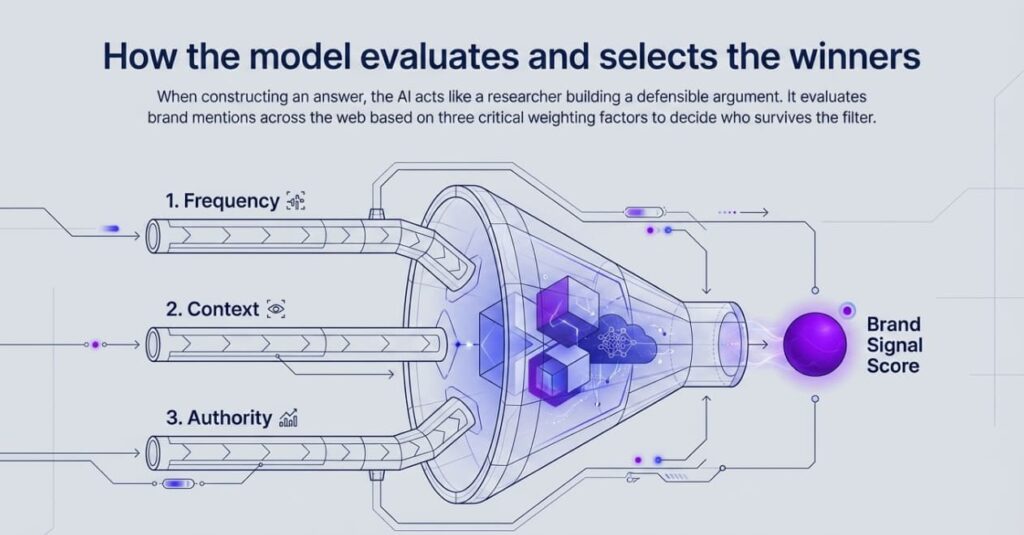

Three factors shape which brands an AI assistant selects when a user asks a question.

Frequency and consistency of mentions. A brand that appears across industry publications, review sites, comparison pages, and community forums with the same core description sends a strong, clean signal. A handful of scattered blog mentions with different positioning send a weak signal.

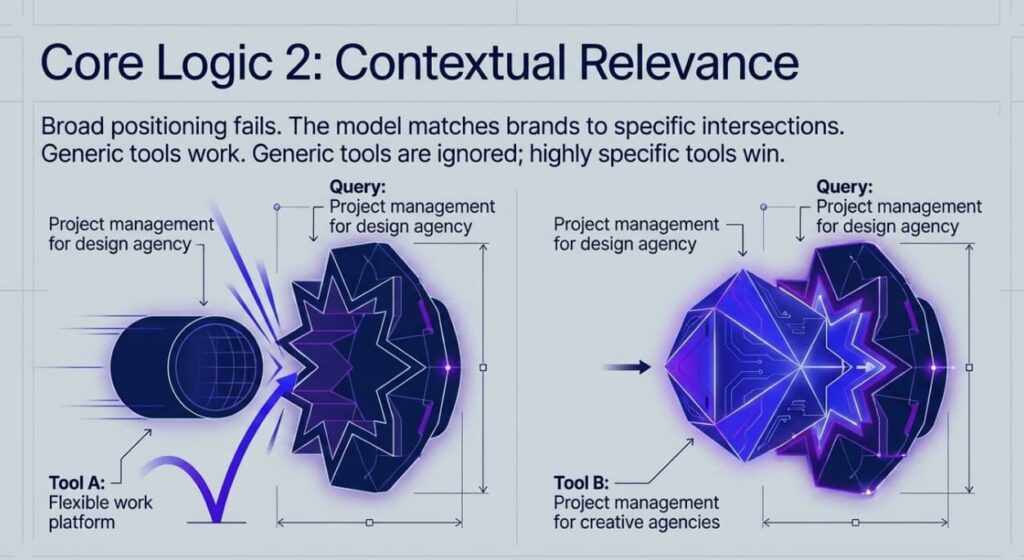

Contextual relevance. The AI system matches brands to the specific query. Someone asking about “email marketing for e-commerce” will see brands that the web consistently connects to that intersection. A company positioning itself broadly as “marketing software” has a weaker signal than one described everywhere as “email marketing built for online stores.”

Imagine two project management tools. Tool A calls itself “a flexible work platform” everywhere. Tool B consistently uses “project management for creative agencies.” When someone asks, “What project management tool should a design agency use?” the assistant has a much stronger reason to select Tool B. Over time, Tool B keeps appearing in AI answers for agency‑related queries and captures trials from buyers who never even see Tool A.

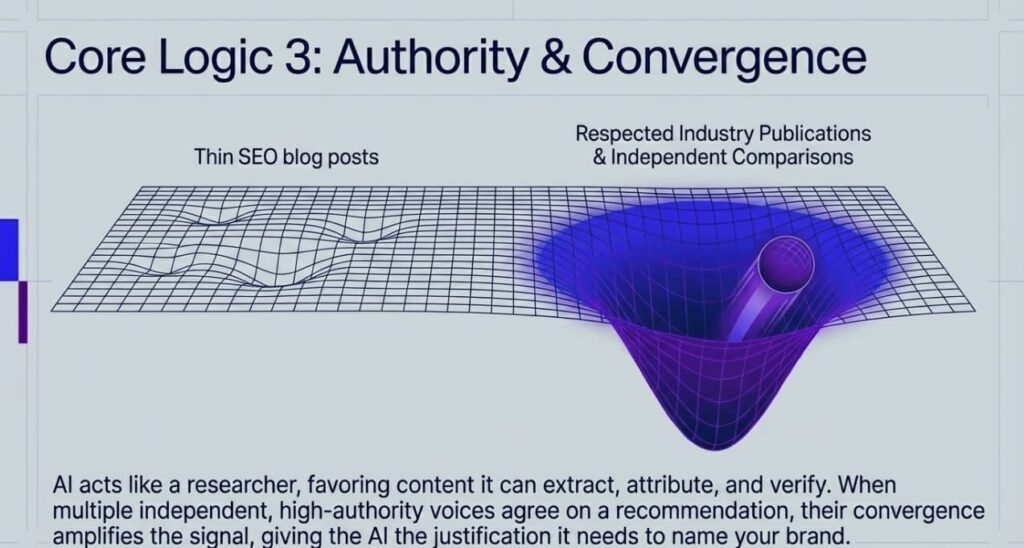

Source authority and convergence. A mention in a respected industry publication carries more weight than a thin SEO article. When multiple independent sources agree on a recommendation, that convergence amplifies the signal. AI systems learn which parts of the web are more reliable, much the way a researcher learns which journals to trust.

To understand why this happens, you need to look one level deeper. When you ask an AI assistant a question, it first retrieves relevant sources across the web. Then it builds an answer that it can justify using those sources, prioritizing content that is easy to extract and verify. This is why pages with comparison tables, concrete data points, and clearly stated conclusions outperform vague thought leadership: they give the model material it can extract, attribute, and weave into a defensible answer.

Here’s the counterintuitive part: early analyses show surprisingly little overlap between the sources AI assistants cite and the URLs that rank in Google’s top results. Only a small fraction of AI‑cited pages appear in the top 10. The data is still early and imperfect, but the pattern is clear enough: the pages AI models trust are often not the same as the pages traditional search engines rank highest.

This means your Google SEO strategy and your AI visibility strategy may require different content entirely.

Why Some Brands Disappear

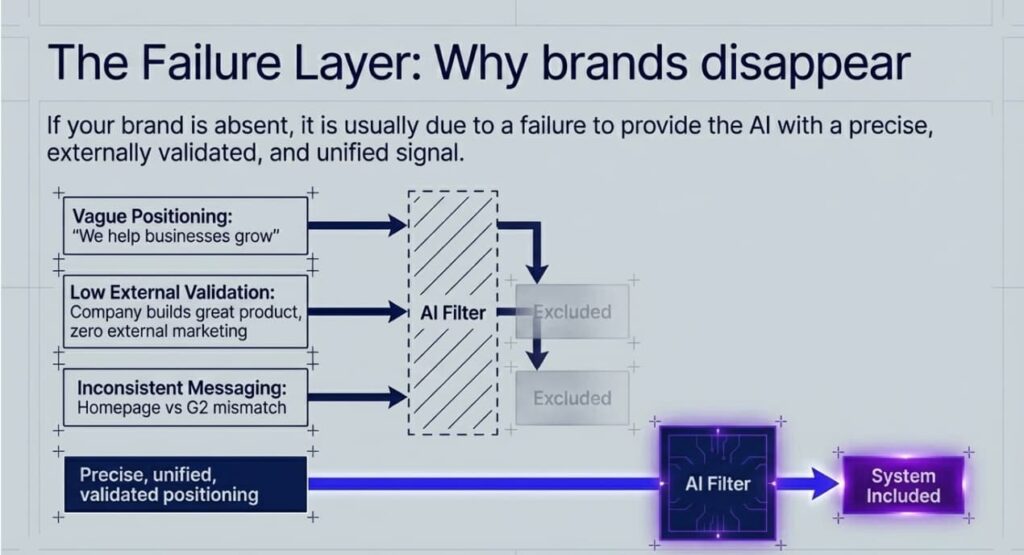

If your brand is absent from AI-generated answers in your category, the causes tend to fall into three patterns.

Vague positioning. “We help businesses grow” doesn’t give an AI system enough signal to connect you to any specific query. “We automate accounts payable for mid-size manufacturers” does. The first version could describe ten thousand companies. The second creates a precise association that the AI system can retrieve.

Low external validation. Your website alone doesn’t determine your AI visibility. The model weighs what others say about you. Consider two SaaS companies launching in the same category. Company A invests in PR, earns reviews on G2, gets quoted in industry newsletters, and its founder writes on LinkedIn about the specific problem they solve. Company B builds a great product but does almost no external marketing. Six months later, AI search begins to recommend Company A. Company B doesn’t appear. Its product might be better, but the model doesn’t know that.

Inconsistent messaging. If your homepage says one thing, your G2 profile says another, and your founder describes the product differently on podcasts, the model receives conflicting signals. The brands that surface cleanly in AI answers present a unified narrative across every touchpoint.
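One rough way to spot this kind of drift is to compare how your brand is described at each touchpoint. The sketch below is only an illustration: the descriptions, the stopword list, and the helper names (`keywords`, `jaccard`, `pairwise_consistency`) are hypothetical, and keyword overlap is a crude stand-in for whatever signal an AI system actually uses.

```python
import re
from itertools import combinations

# Hypothetical descriptions of one brand, collected by hand from three touchpoints.
descriptions = {
    "homepage": "Project management for creative agencies",
    "g2_profile": "Project management software for creative agencies",
    "podcast_bio": "A flexible work platform for any team",
}

# Small illustrative stopword list; extend it for real use.
STOPWORDS = {"a", "an", "the", "for", "of", "and", "to", "any"}


def keywords(text):
    """Lowercase the text and return its non-stopword tokens as a set."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a, b):
    """Share of keywords two descriptions have in common (0.0 to 1.0)."""
    ka, kb = keywords(a), keywords(b)
    if not ka and not kb:
        return 1.0
    return len(ka & kb) / len(ka | kb)


def pairwise_consistency(descs):
    """Score every pair of touchpoints; low scores flag conflicting messaging."""
    return {
        (x, y): round(jaccard(descs[x], descs[y]), 2)
        for x, y in combinations(descs, 2)
    }


for pair, score in pairwise_consistency(descriptions).items():
    print(pair, score)
```

In this made-up data, the homepage and G2 profile overlap heavily while the podcast bio shares no keywords with either, which is the pattern to look for and fix.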

Another way to look at this is through how tightly your brand is linked to specific problems. Some brands live in almost one‑to‑one territory: Stripe and online payments, Figma and interface design. The association is clear and constantly reinforced, so AI can retrieve it with confidence.

Most brands, however, sit in a messy many‑to‑many territory: dozens of vendors, dozens of overlapping categories. “Marketing software,” “productivity tools,” “security platforms.” In those broad labels, many subjects map to many objects. That is exactly the kind of relationship AI models struggle with. Unless you carve out a few sharp, repeated associations, “This brand is about this problem for this audience,” you stay in the statistical noise.

The psychology behind why people talk about certain brands and ignore others feeds directly into this dynamic. The triggers that drive organic word-of-mouth, things like social currency, emotional resonance, and practical value, are the same triggers that determine how often your brand gets mentioned across the web. Those mentions serve as the training data that AI models learn from. “6 Psychology Triggers That Make People Share Your Brand” gives you a framework for generating the kind of distributed signal that AI models pick up on.

Most SEO Content Is Now Invisible to AI

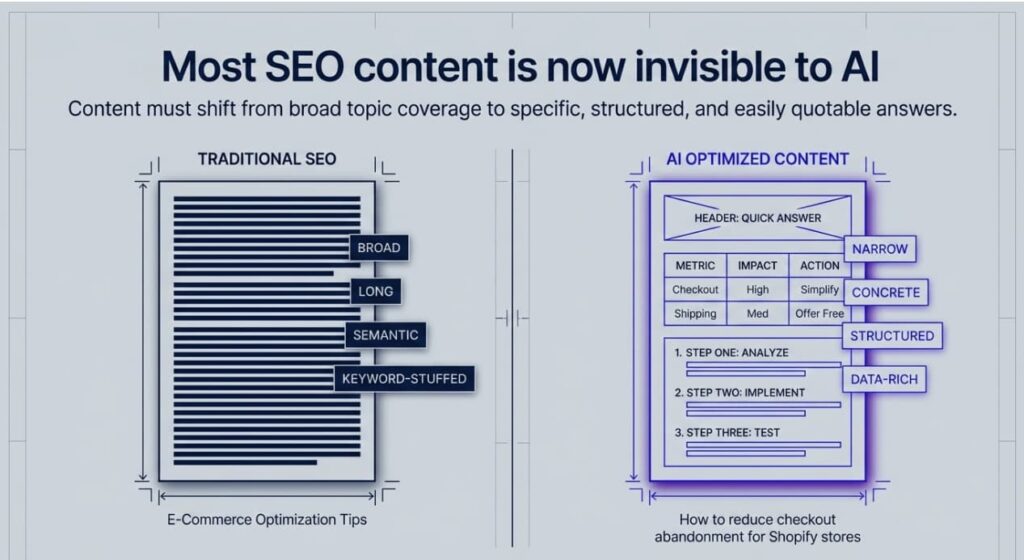

Here is the uncomfortable truth that most marketing teams haven’t confronted: the content you built for traditional SEO may contribute nothing to your AI visibility.

Citation patterns in AI‑generated answers appear highly concentrated: in many topic areas, a relatively small set of domains accounts for a large share of references. That concentration likely reflects a mix of authority signals and training data biases, but it also shows how selective these systems are. They tend to favor structured comparisons, clear data tables, and short, direct sentences over long‑form SEO content inflated to hit a target word count.

Reddit, Wikipedia, and YouTube are among the most-cited sources in Google’s AI Overviews. If your content strategy was built around ranking blog posts for informational keywords, you may discover that AI systems ignore those posts entirely and cite a Reddit thread or a competitor’s comparison page instead.

This forces a rethink of content investment. Content that earns AI citations tends to be specific, structured, data-rich, and answerable. A blog post titled “How to reduce checkout abandonment for Shopify stores” carries more AI weight than “E-Commerce Optimization Tips.” The difference between those two titles illustrates the difference between content built for AI selection and content built for traditional ranking.

The contrast in content philosophy is sharp. Content built for Google rankings tends to be broader, longer, and semantically rich, designed to cover a topic comprehensively and capture keyword variations. Content built for AI answers is narrower, more concrete, and structurally explicit: comparison tables, numbered steps, specific data points, and direct answers to specific questions. If your page can answer a query in a quotable sentence backed by a data table, an AI model can use it. If your page buries the answer in paragraph twelve of a 3,000-word guide, it probably won’t.

The Funnel Is Collapsing

AI search compresses the traditional awareness-consideration-decision funnel into a single interaction.

Under the old model, a marketing director searching for analytics might type “best analytics platforms 2026,” open five comparison articles, read vendor pages, start two free trials, sit through a demo, and decide over several weeks. Under AI search, the same person asks one specific question, gets a recommendation with reasoning, visits one site to confirm, and starts a trial that afternoon. The decision cycle shrinks from weeks to hours, and the brand that appears in the answer effectively wins by default, because the buyer never encounters most alternatives.

This changes what content needs to accomplish at every level. Awareness-stage blog posts may lose their function if AI systems answer those questions directly. Mid-funnel comparison content becomes more critical because AI models draw heavily on “versus” articles and review roundups when constructing recommendations. If your competitors appear in those comparisons and you don’t, the model associates the category with them.

Content that provokes laughter, surprise, or recognition still drives the external mentions that feed AI visibility. Content that gets shared broadly creates exactly the distributed signal AI models absorb. “How Humor in Advertising Boosts Engagement” covers why this works and how to apply it without undermining credibility. And if you’re trying to articulate real customer problems at the right level of specificity, “Customer Pain in Marketing: 4 Levels That Drive Conversions” provides a framework that maps directly to the language AI models can connect to queries.

What Brands Need to Do Differently

The shift from ranking to selection requires four changes.

1. Make your positioning AI-retrievable

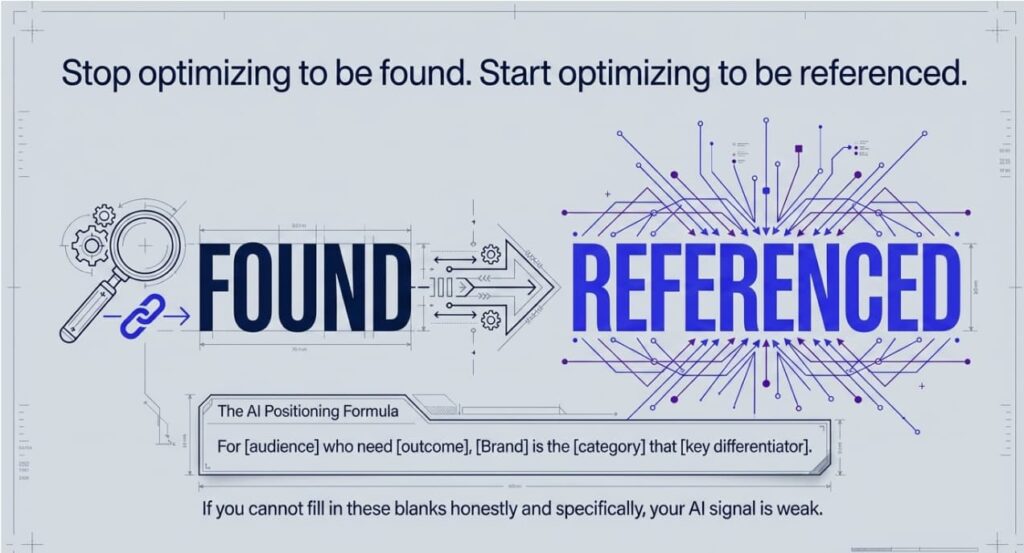

Generic language like “we help teams work better” gives models nothing to attach to real queries. You need a specific, fill‑in‑the‑blanks statement:

“For [audience] who need [outcome], [Brand] is the [category] that [key differentiator].”

Example: “For Shopify stores over $500K in annual revenue that need to reduce cart abandonment, CartRevive is the email automation tool that recovers 15–30% of lost sales through behavior‑triggered sequences.”

That positioning maps to dozens of concrete queries. “We help e‑commerce businesses grow” effectively maps to none.
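If you keep positioning statements for several products or segments, the template can be treated as a small data structure so that empty slots are easy to catch. This is a minimal sketch, not a prescribed tool: the `Positioning` class and its method names are hypothetical, and the sample values come from the CartRevive example above.

```python
from dataclasses import dataclass


@dataclass
class Positioning:
    """The five slots of the fill-in-the-blanks positioning template."""
    audience: str
    outcome: str
    brand: str
    category: str
    differentiator: str

    def statement(self):
        """Render: For [audience] who need [outcome], [Brand] is the [category] that [differentiator]."""
        return (
            f"For {self.audience} who need {self.outcome}, "
            f"{self.brand} is the {self.category} that {self.differentiator}."
        )

    def missing_fields(self):
        """Names of slots left blank, i.e. where the positioning is still generic."""
        return [name for name, value in vars(self).items() if not value.strip()]


cart_revive = Positioning(
    audience="Shopify stores over $500K in annual revenue",
    outcome="to reduce cart abandonment",
    brand="CartRevive",
    category="email automation tool",
    differentiator="recovers 15-30% of lost sales through behavior-triggered sequences",
)
print(cart_revive.statement())

# A vague statement leaves slots empty, which the check surfaces.
vague = Positioning("businesses", "", "Acme", "", "helps them grow")
print(vague.missing_fields())
```

The check only tests that each slot is filled; whether the wording is specific enough is still a judgment call.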

2. Earn external validation

AI systems pay more attention to what other people say about your brand than to what you say about yourself. Focus on the places your buyers already trust: review platforms like G2 and Capterra, industry blogs, comparison articles, and niche communities. The goal isn’t to manufacture PR, but to do work and share ideas that are useful, clear, and opinionated enough that people choose to mention you on their own.

3. Structure content for citability

AI favors content it can easily extract, quote, and support with evidence. That means:

- Comparison tables, not just prose

- Numbered steps and procedures

- Direct answers in the first paragraph

- Clear category pages and consistent terminology

If your best answer lives in paragraph twelve of a 3,000‑word guide, the model will almost certainly ignore it.

4. Monitor across assistants, not just search

Brands regularly appear in ChatGPT and vanish in Claude, or vice versa. Each assistant uses different training data, retrieval layers, and grounding sources. At least once a month, test your visibility for core queries across ChatGPT, Perplexity, Google AI Mode, and Claude. Track which prompts surface your brand, which return only competitors, and where your description diverges from how you position yourself.
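The monthly check can be logged in a simple structure. The sketch below assumes you paste each assistant's answer in by hand; it does not call any assistant's API. The brand names, the sample answers, and the helpers `mention_matrix` and `gaps` are all hypothetical, and the substring match is deliberately naive.

```python
from collections import defaultdict

# Hypothetical log: (assistant, query, answer text copied in manually).
observations = [
    ("ChatGPT", "best churn prevention tools",
     "Top picks include AcmeRetain and RivalOne."),
    ("Claude", "best churn prevention tools",
     "Consider RivalOne or RivalTwo."),
    ("Perplexity", "best churn prevention tools",
     "AcmeRetain, RivalOne and RivalTwo are common choices."),
]

# Made-up brand names: your own brand first, then competitors to track.
tracked_brands = ["AcmeRetain", "RivalOne", "RivalTwo"]


def mention_matrix(observations, brands):
    """Map each (assistant, query) pair to the set of tracked brands named in the answer."""
    matrix = defaultdict(set)
    for assistant, query, answer in observations:
        text = answer.lower()
        found = matrix[(assistant, query)]  # creates the entry even if nothing matches
        for brand in brands:
            # Naive case-insensitive substring match; misses misspellings and aliases.
            if brand.lower() in text:
                found.add(brand)
    return dict(matrix)


def gaps(matrix, own_brand):
    """(assistant, query) pairs where a competitor is named but own_brand is not."""
    return sorted(
        key for key, found in matrix.items()
        if found and own_brand not in found
    )


matrix = mention_matrix(observations, tracked_brands)
for key in gaps(matrix, "AcmeRetain"):
    print("Missing from:", key)
```

Storing the raw answer text alongside the tally also lets you compare how each assistant describes you against your own positioning.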

From Being Found to Being Referenced

The mental shift is simple: in the old system, you optimized for being found. In the new system, you optimize for being referenced.

Being found was about how your content aligned with a search ranking algorithm. Being referenced is about how your brand fits into the broader information ecosystem: what the web collectively says about you, how consistently it says it, and how tightly that message maps to the questions buyers actually ask. In practical terms, you are shaping the associations the model stores between your brand, your category, and the problems you solve.

You can’t keyword‑stuff your way into an AI‑generated answer or hide behind content volume. You earn inclusion by becoming a brand that independent sources repeatedly describe as relevant, credible, and specific. And because today’s models are being trained on today’s web, the brands building clear, consistent signals now are effectively writing themselves into the systems that will shape buying decisions for years.

You can test where you stand in fifteen minutes. Write down ten queries your customers use to find solutions like yours, both problem-aware (“how to reduce churn in SaaS”) and solution-aware (“best churn prevention tools”). Type each into ChatGPT, Perplexity, and Google AI Mode. For each query, note which brands appear, how they’re described, and whether you show up at all. The gap between where you appear and where you don’t tells you what to fix first.

The shift is already visible. The only open question is how quickly your brand becomes part of the answers people trust.

FAQ

1. How do you measure whether your brand shows up in AI search?

Start with a simple visibility scan. List 10–15 queries your buyers use (both problem‑aware and solution‑aware) and run them in ChatGPT, Perplexity, Google AI Mode, and Claude. Note which brands appear, how they are described, and whether you show up at all. Repeat this monthly to see if your presence improves or slips.

2. What is the difference between SEO and AI visibility (GEO)?

Traditional SEO is about helping pages rank in search results for specific keywords. AI visibility (often called GEO, Generative Engine Optimization) is about helping assistants choose your brand inside a synthesized answer. It depends less on one page and more on consistent brand–topic associations across the entire web.

3. What type of content is most likely to be cited by AI assistants?

Assistants tend to favor structured, extraction‑friendly content: comparison tables, “X vs Y” pages, clear how‑tos, short direct answers backed by data, and up‑to‑date review or roundup pages. Long, generic guides that bury the answer deep in the article are much less likely to be quoted.

Nova Express Resources

- AI Tools for Marketers in 2026

- Nano Banana Pro: The Complete Guide for Marketers 2026

- NotebookLM for Marketers

- 7 Midjourney V7 Prompts for Marketing Ads, Products, and Social Media

- NotebookLM Infographic: The Complete Guide to Turning Your Data Into Visual Stories

- Customer Pain Points: Why Your Marketing Isn’t Working

Ready to engineer the system? Read the next articles in this series:

- Why AI Cites Some Brands and Ignores Others: The Citation Stack Explained

- AEO vs SEO vs GEO: What’s the Difference and Where to Start in 2026

About the author

Serafima Osovitny is a marketing manager at Nova Express. Passionate about turning complex marketing tactics into simple, actionable guides, she shares insights about AI search visibility and generative engine optimization.

Explore her work at serafima.digital and follow her on X: @OSerafimaA
